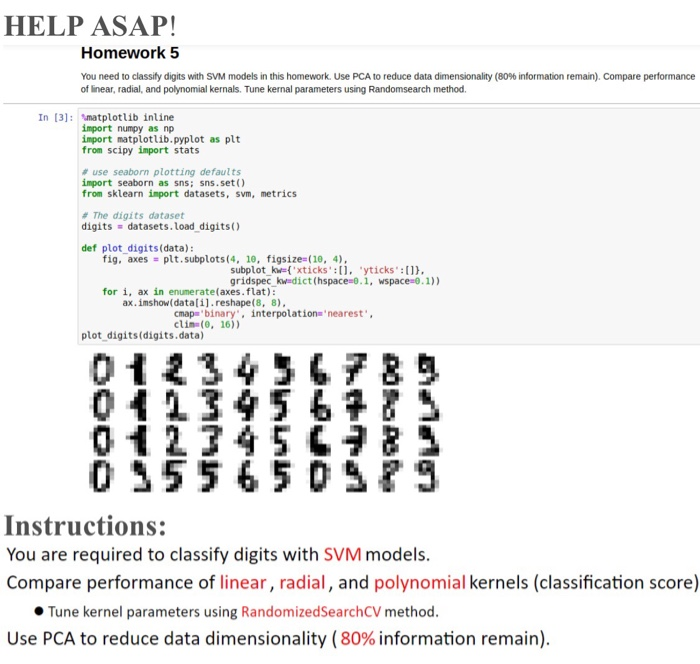

Principal component analysis (PCA) and singular value decomposition (SVD) are commonly used dimensionality reduction approaches in exploratory data analysis (EDA) and machine learning. They are both classical linear dimensionality reduction methods that attempt to find linear combinations of features in the original high-dimensional data matrix to construct a meaningful representation of the dataset.

NOTE: PCA compresses the feature space, so you will not be able to tell which original variables explain the most variance, because they have been transformed. If you'd like to preserve the original features to determine which ones explain the most variance for a given data set, see the scikit-learn feature documentation.

First, we'll start by setting up the necessary environment.

To perform PCA, we apply linear dimensionality reduction using singular value decomposition (SVD) of the data, keeping only the most significant singular vectors to project the data onto a lower-dimensional space. PCA allows us to determine which features capture similar information and discard the redundant ones to create a more parsimonious model. It also allows us to quantify the trade-off between the number of features (components) we keep and the total variance they explain. SciPy's high-level syntax makes it accessible and productive for programmers from any background or experience level, offering the flexibility of Python with the speed of compiled code.
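The SVD-based reduction and the variance trade-off described above can be sketched with NumPy alone. This is a minimal illustration, not the scikit-learn or SciPy implementation: the helper name `pca_svd`, the toy data, and the 95% threshold are all assumptions made for the example.

```python
import numpy as np

def pca_svd(X, n_components):
    """Hypothetical helper: project X (n_samples x n_features) onto its top PCs."""
    X = np.asarray(X, dtype=float)
    # Center each feature; PCA is defined on mean-centered data.
    X_centered = X - X.mean(axis=0)
    # Thin SVD: X_centered = U @ diag(S) @ Vt, singular values in descending order.
    U, S, Vt = np.linalg.svd(X_centered, full_matrices=False)
    # Variance captured by each component (sample variance, n - 1 denominator).
    explained_variance = S**2 / (X.shape[0] - 1)
    explained_variance_ratio = explained_variance / explained_variance.sum()
    # Keep only the most significant right singular vectors and project onto them.
    components = Vt[:n_components]
    scores = X_centered @ components.T
    return scores, components, explained_variance_ratio

# Made-up toy data: 3 independent features plus 2 near-copies of the first two.
rng = np.random.default_rng(0)
base = rng.normal(size=(200, 3))
X = np.column_stack([
    base,
    base[:, 0] + 0.01 * rng.normal(size=200),
    base[:, 1] + 0.01 * rng.normal(size=200),
])

scores, components, ratio = pca_svd(X, n_components=3)

# Trade-off: smallest number of components whose cumulative share of the
# variance reaches an (arbitrarily chosen) 95% threshold.
cumulative = np.cumsum(ratio)
k = int(np.searchsorted(cumulative, 0.95) + 1)
print("explained variance ratio:", np.round(ratio, 3))
print("components needed for 95% of the variance:", k)
```

Because two of the five columns are near-duplicates, almost all of the variance lands in the first three components, which is the "similar information" redundancy that PCA exposes.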


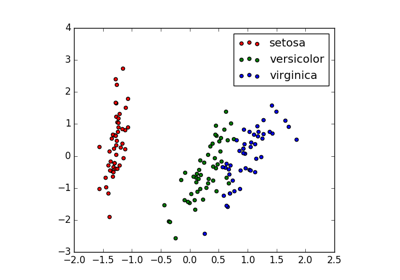

Principal component analysis is a technique used to reduce the dimensionality of a data set. PCA aims to find linearly uncorrelated, orthogonal axes in the m-dimensional space, known as principal components (PCs), and to project the data points onto those PCs. It uses an orthogonal linear transformation to project the features of a data set onto a new coordinate system in which the direction that explains the most variance is positioned at the first coordinate (thus becoming the first principal component). The first PC therefore captures the largest variance in the data. PCA is typically employed prior to implementing a machine learning algorithm because it minimizes the number of variables needed to explain the maximum amount of variance for a given data set. SciPy wraps highly optimized implementations written in low-level languages like Fortran, C, and C++.
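The properties listed above (orthogonal axes, uncorrelated projections, and the first PC carrying the largest variance) can be checked numerically. The sketch below takes the eigendecomposition route on the covariance matrix, using NumPy only; the two-feature data set and all variable names are assumptions for illustration.

```python
import numpy as np

rng = np.random.default_rng(42)
# Made-up correlated 2-D data: the second feature is mostly a scaled copy of the first.
x = rng.normal(size=500)
X = np.column_stack([x, 0.5 * x + 0.1 * rng.normal(size=500)])

X_centered = X - X.mean(axis=0)
cov = np.cov(X_centered, rowvar=False)

# eigh is meant for symmetric matrices and returns eigenvalues in ascending
# order, so reorder to put the largest-variance direction first.
eigenvalues, eigenvectors = np.linalg.eigh(cov)
order = np.argsort(eigenvalues)[::-1]
eigenvalues = eigenvalues[order]
components = eigenvectors[:, order].T  # one principal axis per row

# Orthogonal linear transformation into the new coordinate system.
scores = X_centered @ components.T

# The principal axes are orthonormal.
orthonormal = np.allclose(components @ components.T, np.eye(2))
# The projected coordinates are uncorrelated: their covariance is diagonal.
score_cov = np.cov(scores, rowvar=False)
uncorrelated = np.allclose(score_cov, np.diag(eigenvalues))
# The first PC carries the largest variance.
first_is_largest = score_cov[0, 0] >= score_cov[1, 1]

print(orthonormal, uncorrelated, first_is_largest)
```

Each row of `components` is a linear combination of the original features, which is why the transformed coordinates no longer map one-to-one onto the original variables.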